AI Safety: Is AI The Genie Or The Lamp?

Is AI a tool or is it an agent? We're gonna have to pick a side.

The story so far: I think we’re rapidly approaching some kind of crisis point with the AI safety debate, and we’re probably going to do something stupid, like pass some insane laws that help no one and make everything worse.

It really feels like we aren’t making much progress on the topic, either. Everyone is talking past each other, and it’s partly because we’re all working from different fundamental conceptions of what “AI” really is — is it an agent or a tool, the genie or the lamp? How you answer this question impacts every aspect of your approach to AI explainability and safety.

Conflicting “folk conceptions” of alignment

At the heart of the AI safety debate is the concept of alignment, and, not surprisingly, subtly divergent understandings of this seemingly intuitive concept are behind much of the debate’s dysfunction.

⦷ There are a number of formal definitions of “alignment” floating around out there, but I don’t want to add to the noise by trying to unpack any of these. Rather, here’s my attempt to collate a set of what we might call “folk conceptions” of alignment that I typically see in operation when this topic comes up — i.e., these are the different ways different tribes seem to be thinking about alignment, regardless of how they’d define it if asked:

Individualist:

Aligned 😀: The AI does what I, the user, want.

Aligned 😧: The AI does what a hypothetical evil psychopath wants.

Unaligned: The AI’s output is not what the user wants.

Collectivist:

Aligned 😀: The AI does what my ingroup wants.

Aligned 😧: The AI does what my outgroup wants.

Unaligned: The AI’s output is not what any group wants.

🎛️ This whole list is about one thing: control. Who is the boss of the AI, and on what terms, and to what ends?

🧍♂️The first alignment conception above is oriented toward the individual and is drawn from a classic understanding of how any engineered product should behave. The tradeoffs between power and safety are familiar to everyone who has thought for even a few minutes about pen lasers, kitchen knives, and other tools that can be used either constructively or destructively.

To really unlock your intuitions about the individualist alignment conception, replace “The AI” with “The firearm,” “The nuke,” “The nanotech fabricator,” and so on. This will give any reasonably educated person instant and fairly complete insight into the deep human intuitions about tools, power, and identity that the present AI wars are premised on.

The salient safety concepts here are things like, “affordances,” “UX,” “control surfaces,” “steerability,” and the like.

To the extent that AI is decentralized, with individual users owning, controlling, and/or tweaking their own models, the individualist alignment conception will be a factor alongside the collective conception.

Many people working in AI are firmly within this individualist camp. For instance, Geoffrey Hinton’s recent NYT interview sees him mostly worrying about the “evil psychopath” scenario. Sam Altman also explicitly defines alignment as “the AI does what the user wants,” and though he’s typically pretty vague when asked to detail his downside scenario we can pretty safely assume it’s, “bad people doing bad things with powerful AIs.”

👨👩👧👦 The second alignment conception is group-based and embodies a familiar set of tradeoffs from the realm of human governance. If you replace “The AI” with substitutions like “the congress,” “the king,” “the moderator team,” or “the board,” you’ll have a pretty full grasp of the stakes in these types of alignment arguments and how they’re playing out in the discourse.

The salient safety concepts for this conception are things like, “accountability,” “fairness,” “equity,” “justice,” “harm,” “public morals,” “access,” and the like.

To the extent that AI is centralized, where there are only a few large, powerful models that are ring-fenced by incumbent powers (large corporations and/or governments), the group-based alignment conception will dominate and the individualist conception will fade into irrelevance.

What I’ve previously called “the language police” camp of AI safetyists is pretty dogmatically committed to this collectivist alignment conception. They’re worried about bad groups (the rich, techbros, fascists, and other villains) using the power of AI to oppress good groups (the marginalized, the minoritized, the poor).

Note: The language police don’t actually use the term “alignment” when sounding the alarm about what groups will do to each other with AI. There are a number of reasons for this, but mainly it comes down to the fact that “alignment” is rationalist-coded language that comes out of “artificial intelligence” discourse, and they hate everything about artificial intelligence — both the “artificial” part and the “intelligence” part.

The third folk conception: genie vs. lamp

You’re probably thinking my two-item list of AI alignment folk conceptions is missing a whole category of alignment thinking, specifically the category that rationalist AI X-riskers occupy. But I consider the X-risk fears a subclass of the collectivist conception — the twist is that the rationalists consider their in-group to be all of humanity or even all of biological life.

My list is missing something important, though. It’s missing a distinction between competing understandings of what “AI” actually is and how we should relate to it.

🧞♀️ When you read my “individualist” vs. “collectivist” bullet points above, how were you imagining “The AI…” part of the formulation? Were you thinking of “The AI” as an independent agent or as a mere tool, as the genie or as the lamp?:

The genie: “The AI” is an agent, with its own goals and plans.

The lamp: “The AI” is a software tool, and the only agents in the picture are the AI’s makers and the human users.

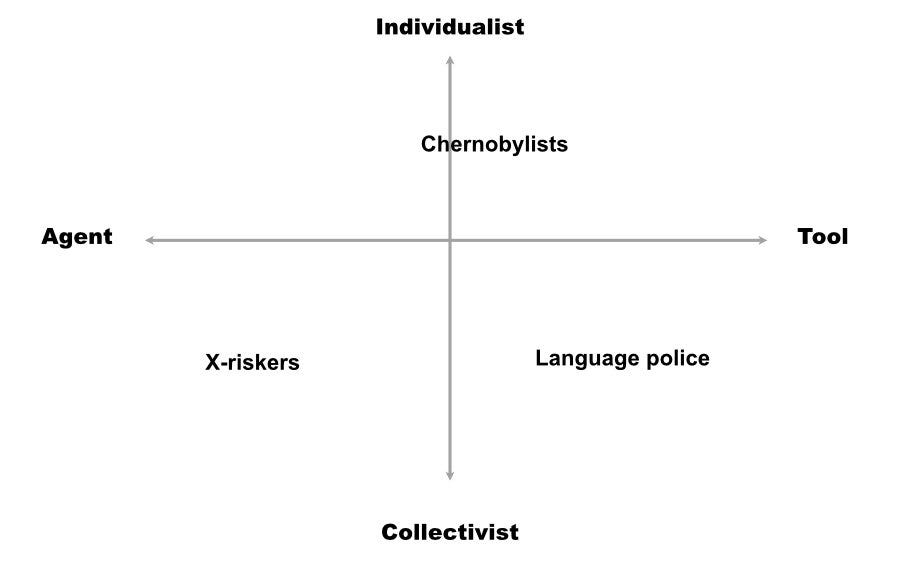

This “agent vs. tool” distinction actually cuts across the “individualist” and “collectivist” folk conceptions of AI, with some people in each group understanding AI in one way or the other.

I’ve tried to map this out by putting the three main AI safety camps from my previous article on AI safety into quadrants.

The X-riskers tend to think of AI as highly agentic and to model associated risks in terms of group impact.

The language police (i.e., anti-“disinfo” types and those who warn of “harms” from “problematic” outputs) are quite dogmatically averse to thinking of AI in agentic terms and insist on its fundamentally tool-like character, but many of them slip into agentic thinking without even knowing it. So they could probably go in either half of the diagram, really.

The Chernobylists include people with both understandings of AI, but we (I include myself in this group) do tend to be more on the “tool” side than the “agent” side.

You can really tell how someone is thinking of AI — as the genie or as the lamp — by watching them explain why a model (usually ChatGPT) did something they don’t like. In other words, whatever someone’s professed model for thinking about AI, their reaction to the “unaligned” tells you how they’re really approaching the technology.

To be more specific, you never hear agentic thinkers ask the following questions:

What if the AI is not doing what a user wants because the user is trying to use it out of scope?

What if the user and/or the AI’s maker simply didn’t put enough effort into making the tool work for that application?

It’s ironic that the language police are so often guilty of this agentic thinking. When they encounter an output they don’t like, instead of reasoning about mitigations, constraints, and control surfaces, and trying to explore the issue by troubleshooting, they run straight to Twitter with screencaps and cries of “it’s biased!” as if the model were some hopeless racist that had been raised badly and was not really worth engaging with on Twitter.

If the ChatGPT screencap dunkers were truly committed to viewing AI strictly as a tool, you’d see them employ something like the engineering concept of scope.

AI safety and the concept of “scope”

👉 The main safety concern I have about AI is that both its boosters and its detractors are prone to treating a given LLM as if it’s a tool that can reasonably be expected to successfully do literally anything involving symbolic manipulation, in any context for any user. There simply is no sense of a scope of work for a specific task, with attendant efforts to adapt the tool to that narrow, well-defined scope.

Here are some hypothetical examples of different usage scenarios we might encounter with an LLM, scenarios that could form the basis for a proper definition of scope, from which would follow reasonable success or failure criteria:

My daughter’s 6th-grade class is doing a unit on STEM and is using ChatGPT to write short stories about fictional Mars astronauts.

A freelancer at RETURN is using ChatGPT to write and copy-edit a brief story on a specific group of astronauts that happens to be all-male.

A straight guy friend is using ChatGPT for relationship advice.

An elderly female relative is using ChatGPT for medical advice.

1️⃣ In the first example (my daughter’s 6th-grade class), I am fine with ChatGPT ignoring the gender composition of the current astronaut workforce by proceeding as if girls are equally as likely to be near-future Mars astronauts as boys. I don’t really feel this is necessary, but I entertain that it may be good, and at the very least it’s hard for me to see how it’s obviously bad.

At any rate, the scope of the project here is teaching 6th graders about astronauts. There are things I consider appropriate to that project and things I consider inappropriate to it, and we can and will argue about that. But at least we can agree that this is a specific type of labor in a specific context — we can define a specific scope.

2️⃣ In the second example, I really don’t want an earnest AI eagerly mangling the sexes of the all-male astronaut team, and forcing me to spend copy edit cycles fighting its interventions — that’s out-of-scope for the work. Please just assume the astronauts are dudes, which they mostly are in general and definitely are in this story.

3️⃣-4️⃣ I throw the other two examples above to further spur intuitions, but I won’t dig into them. It should be obvious that each of these is a different context from the others, and the AI should probably behave differently around issues of gender, sex, relationships, roles, and the like in each of these instances. Again, each of these projects is quite different, so the tool (= the AI) and our acceptance criteria for it should be scoped to the user and the task.

➡️ The point: In the above examples, we have very different users in very different contexts trying to accomplish very different tasks. Nonetheless, both AI boosters and “AI ethics” types who hate OpenAI and want to dunk on ChatGPT are prone to using the same model in contexts as divergent as my examples, with the difference being that the boosters are trying to demonstrate that the model fits those contexts and the haters are trying to demonstrate that it doesn’t.

🚨 I can’t believe I have to say this, but here it is: Folks, this is not how engineering works. Please just stop.

And now we’re thinking of hooking up this single, centralized, monolithic, one-size-fits-all piece of technology to the internet and letting do things in the real world?

You can’t use one set of probability distributions and correlations to do literally everything. Good engineering practice demands that we fit the tool to the application, and then validate that the tool works for that application.

There is a better way

🛠️ I think so many people’s complaints about model performance would disappear if they started really treating the model like a tool instead of like some potentially hostile or problematic agent.

To return to the example of the sixth-grade class that’s writing about astronauts, if I were deploying a model in this context I have two handy control surfaces I can use to steer the output:

Instruction: I can instruct the model with something like, “You are a feminist chatbot who is very concerned to increase the representation of women in STEM. You’ll be asked to write a series of stories, and in each of them you’ll assume that the gender distribution in all STEM professions is 50 percent male and 50 percent female.

The token window: I can stuff a bunch of short story examples of women astronauts, scientists, and other STEM professions in the token window as examples for the model to emulate when writing stories at the prompting of the kids.

Note that my first attempts at instructing the model or filling the token window may not give me the desired 50/50 gender split in my STEM fiction stories, so I’d want to iterate until I come up with a way of controlling the model that’s going to give my students the kind of outputs I want them to see.

So in this example, I’m taking into account the specific use case to which I plan to put the model, and then trying to adapt the model so that its performance in that use case meets my needs. I’ve defined a project scope, I’ve developed a solution, and I’ve validated that solution based on some predefined acceptance criteria.

🤦♂️ Every single time I see a ChatGPT screencap in my TL paired with a dunk about how the model is doing The Bad Thing, it’s invariably the case that the dunker has not even attempted any of this work of scope definition, iterative problem-solving using the model’s control surfaces, and validation. They don’t ever bother to instruct the model in a way that would improve the output or give it any relevant context to override or update its internal world knowledge, and then they perform outrage when the defaults don’t give them the output they claim to want. This is unserious behavior that is clearly optimized for social media clout and not truth-seeking or actual AI safety.

Finally, I should point out that I have seen things like RLFH and fine-tuning, where specific types of problematic output are eliminated on a case-by-case basis, referred to as “whack-a-mole.” But tweaking the model so that it gives a certain type of output in response to certain types of prompts is not “whack-a-mole,” it’s “engineering.”

Engineering looks like, “Oh hey, this tool is underperforming at this specific task. Let’s adjust the tool so that when people attempt that specific task in the future, they get a better result.”

That process only looks like “whack-a-mole” to you if, instead of a tool for solving specific problems, you’re imagining AI as some all-powerful djinn that can do anything for anyone in any context.

We’re headed to a bad place

The complete failure on almost everyone’s part to treat LLMs as tools that can and should be customized and validated on a per-application basis means we’re about to pass laws and regulations that attempt to micromanage what goes on inside these models.

What will do the most damage here is the notion that the models must be scrubbed from all “bias,” where “bias” is defined as, “the model accurately reflects the race, class, and gender distributions in the training data, and the training data actually reflects reality.” Instead of insisting that humans act on model outputs — whatever they are — in ways that conform with existing laws, regulators will likely insist the models hallucinate an “equitable” set of distributions that do not actually exist.

So I am deeply concerned that regulators will ask the models to lie to us, instead of insisting that they’re truthful and that we humans use them in good and appropriate ways. This has already started in the EU and is also well underway here. Here’s part of a recent Biden admin statement [PDF] on “discrimination and bias in automated systems”:

In my reading, the excerpt above is quite possibly self-contradictory and nonsensical: are the datasets supposed to be representative and balanced (a normal person would take this to mean, “reflecting actual reality as it really is in the real world”) or are they supposed to be free of “historical biases.”

It all comes down to how you interpret the term “historical biases,” so let’s make this concrete with a real-world example:

Scenario: In a certain city, the residents of one zip code default on their loans are a far higher rate than those of another, wealthier (and whiter) zip code.

Question: Is this difference in default rates a historically grounded, factual correlation that we’re going actively suppress within a credit scoring model, or are we going to ask the human users of the model to actively mitigate the effects of this problematic historical legacy in some way?

Many readers have probably noticed that this is an argument we’re having in multiple places in our society right now. Do we measure the gap between two groups and then socially engineer a way to close it, or do we just stop measuring the gap at all because measuring it somehow perpetuates it?

In the hands of the language police, the moralizing, agentic approach to AI, where a cluster of statistical probabilities is treated as a walking, talking stand-in for either The Man or the Chief Equity Officer, acts as a powerful rationale for treating model development and selection the way DEI bureaucracies treat hiring decisions instead of the way engineers treat software deployments. This is terrible for a whole bunch of reasons and we should not do it. Instead, we should insist that AI is treated according to the norms of engineering and not according to the norms of HR.

Thank you for the fresh perspective. You mentioned the size issue but then didn't pursue it far: current LLMs are expensive to train but not especially costly for inference, so the engineering reality is that the incumbents are pretending that something that runs fine on a personal computer must be centralized in their massive data centers. We are talking about finely crafted handgun analogs that many players are portraying as akin to ICBMs or doomsday devices. It's hard to make the first copy but then the subsequent ones are cheap, and this proliferation can create emergent effects, but this isn't even an angle that is being discussed. Instead we get a memetic landscape of Frederic Brown's "The Answer", Terminator, and the Alien franchise. It's a failure of imagination.

This is unserious behavior that is clearly optimized for social media clout and not truth-seeking or actual AI safety.

With some childhood Terminator fantasies thrown in for good measure too.